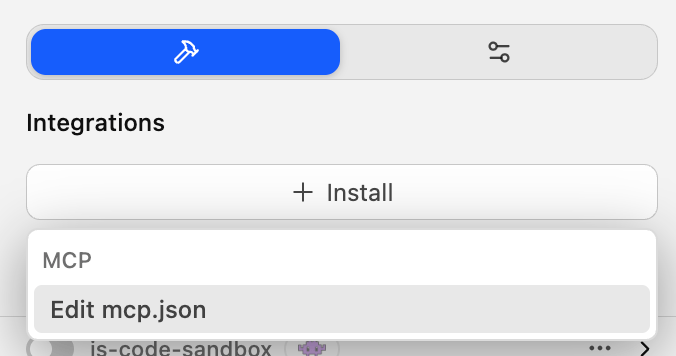

To add an MCP server to OpenCode, just follow these steps:

- Open the `opencode.json` config file for editing, e.g. `nano ~/.config/opencode/opencode.json` on macOS.
- Add the following section:

      "mcp": {
        "my-remote-mcp": {
          "type": "remote",
          "url": "https://my-mcp-server.com",
          "enabled": true,
          "headers": {
            "Authorization": "Bearer MY_API_KEY"
          }
        }
      }

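If `opencode.json` already contains data, paste the section above after the `$schema` entry and add a `,` after the section's last closing brace whenever more keys follow it. Entries in the file must be comma-separated, or OpenCode will fail with a parse error.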
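To make the comma placement concrete, here is a sketch of what a complete file might look like once the section is merged in. The `$schema` URL and the `theme` entry are assumptions that stand in for whatever your file already contains; `my-remote-mcp`, the URL, and `MY_API_KEY` are the placeholders from the snippet above.

```json
{
  "$schema": "https://opencode.ai/config.json",
  "mcp": {
    "my-remote-mcp": {
      "type": "remote",
      "url": "https://my-mcp-server.com",
      "enabled": true,
      "headers": {
        "Authorization": "Bearer MY_API_KEY"
      }
    }
  },
  "theme": "opencode"
}
```

There is a comma after the `$schema` line and after the closing brace of the `mcp` block, because another key follows each of them. The last entry before the final `}` takes no comma.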

Sources: